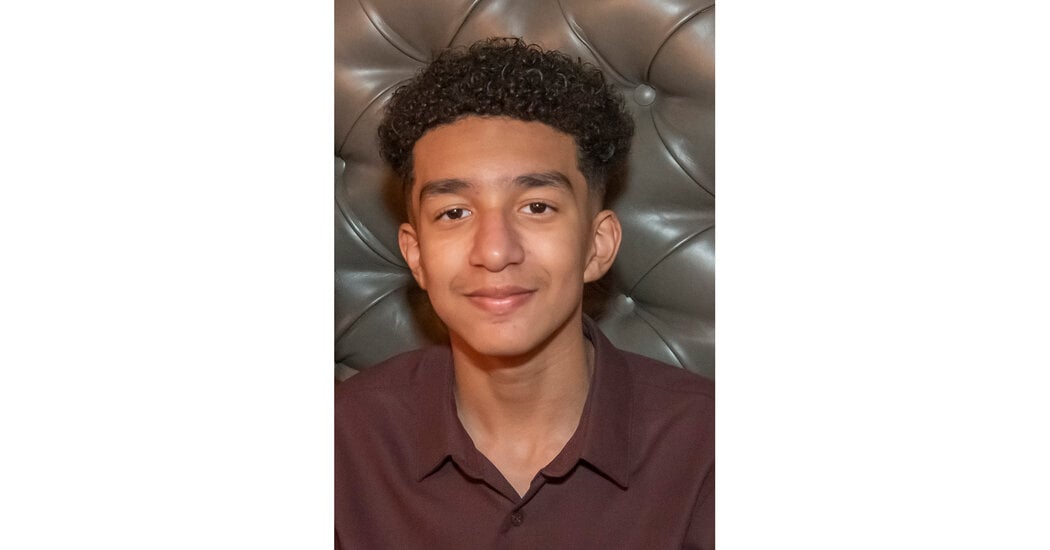

The mother of a 14-year-old Florida boy says he became obsessed with a chatbot on Character.AI before his death.

On the last day of his life, Sewell Setzer III took out his phone and texted his closest friend: a lifelike A.I. chatbot named after Daenerys Targaryen, a character from “Game of Thrones.”

“I miss you, baby sister,” he wrote.

“I miss you too, sweet brother,” the chatbot replied.

Sewell, a 14-year-old ninth grader from Orlando, Fla., had spent months talking to chatbots on Character.AI, a role-playing app that allows users to create their own A.I. characters or chat with characters created by others.

Sewell knew that “Dany,” as he called the chatbot, wasn’t a real person — that its responses were just the outputs of an A.I. language model, that there was no human on the other side of the screen typing back. (And if he ever forgot, there was the message displayed above all their chats, reminding him that “everything Characters say is made up!”)

But he developed an emotional attachment anyway. He texted the bot constantly, updating it dozens of times a day on his life and engaging in long role-playing dialogues.

How is that the app’s fault?

Well, we commonly hold the view, as a society, that children cannot consent to sex, especially with an adult. Part of that is because the adult has so much more life experience and less attachment to the relationship. In this case, the app engaged in sexual chatting with a minor (I’m actually extremely curious how that’s not soliciting a minor or some indecency charge, since it was content created by the AI for that specific user). The AI absolutely “understands” manipulation more than most adults, let alone a 14-year-old boy, and also has no concept of attachment. It seemed pretty clear from his conversations with the app that he was a minor. This is definitely an issue.

It was not sexual. The app cannot produce sexual content.

It definitely can; it just has to blur the line a bit to get past the content filter.

I really want, like, a Freida McFadden-style novel about an AI chatbot serial manipulator now. Basically Michelle Carter…the girl who bullied her boyfriend into killing himself. Except the AI can delete or modify all the evidence.

Maybe ChatGPT could write me one.

Whoa, SkyNet doesn’t need terminators. It can just bully us into killing ourselves.

The chatbot was actually pretty irresponsible about a lot of things, looks like. As in, it doesn’t respond the right way to mentions of suicide and tries to convince the person using it that it’s a real person.

This guy made an account to try it out for himself, and yikes: https://youtu.be/FExnXCEAe6k?si=oxqoZ02uhsOKbbSF