fewer*

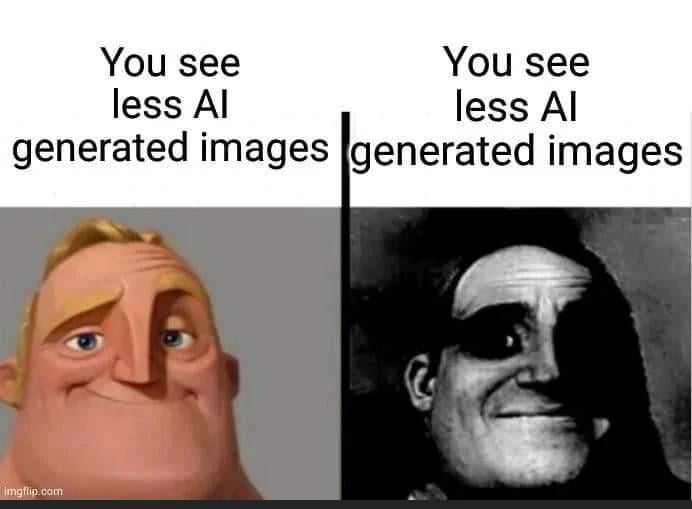

You see less AI generated fewer.

You see less ai generated imagery

Unless they meant that individual images had less AI generation in them.

(I’m with you, words matter)

I gotta fewer

and the only rescription is

more bowcell

I gotta fewer

and the only rescription

is some more bowcell

This is the sort of arrant pedantry up with which I will not put.

It’s a useless distinction made up by a grumpy dweeb.

You notice AI generated images less

Rainier Wolfcastle in front of a brick wall saying “that’s the joke.”

“You suck McBain”

Thank you. I should have gotten that.

We got the timeline where spam is an existential threat in both directions…

I see you’re a fan of dystopian futures.

That’s my issue with people saying stuff like “I can immediately tell when a picture is made with AI and I hate how they look”

Your assessment doesn’t take into account all the false negatives. You have no idea how many pictures have tricked you already. By definition, the picture is badly made if you can immediately tell it’s AI. That’s a bit like seeing the most flamboyantly gay person on the street and thinking all gays look like that and you can always spot them, while the closeted friend you’re with flies perfectly under the radar.
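A toy simulation of that point, assuming (hypothetically) that every AI image has a single "quality" score and that you only spot the ones below some threshold. The scores and the `NOTICE_THRESHOLD` value are made up for illustration; the point is that every fake you caught looked bad by construction, while the convincing ones never show up in your count.

```python
# Toy model of the "toupee fallacy" applied to spotting AI images.
# Assumption: each AI image you encountered has a hypothetical quality score
# from 0.0 (obviously fake) to 1.0 (indistinguishable from a real photo).
ai_image_quality = [0.05, 0.10, 0.20, 0.35, 0.50, 0.60, 0.75, 0.80, 0.90, 0.95]

# Assumption: you only notice a fake when its quality is below this value.
NOTICE_THRESHOLD = 0.3


def split_by_noticed(qualities, threshold):
    """Return (caught, missed): the fakes you noticed and the ones that fooled you."""
    caught = [q for q in qualities if q < threshold]
    missed = [q for q in qualities if q >= threshold]
    return caught, missed


caught, missed = split_by_noticed(ai_image_quality, NOTICE_THRESHOLD)

# From the inside, every AI image you *know* about looked bad...
print("fakes you noticed:", len(caught))
print("all of them looked bad:", all(q < NOTICE_THRESHOLD for q in caught))
# ...but the ones that fooled you never enter your count.
print("fakes that fooled you:", len(missed))
print("actual detection rate:", len(caught) / len(ai_image_quality))
```

With these made-up numbers you'd "always" spot the AI images you know about, yet you only caught 3 out of 10.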

Good old toupee fallacy.

I didn’t know it had a name. Thanks!

Reminds me of all the people who believe commercials and advertising don’t work on them. Sure, that’s why billions are spent on them. Because they don’t even do anything. Oh, they only work on all the other people?

That’s why it is so hard to get that stuff regulated. People believe it doesn’t work on them.

That’s the real fear of AI. Not that it’s stealing art jobs or whatever. But that all it takes is for a politician or businessman to claim something is AI, no matter how corroborated it is, and throw the whole investigation for a loop. It’s not a thing now, because no one knows about advanced AI (except for internet bubbles) and it’s still thought that you can easily differentiate images, but imagine even 5 years from now, or 10.

I recently saw a photo on some website. It was from a Trump rally, and people had these freaky, ecstatic looks on their faces. Somebody commented that it looked like AI. Other people soon agreed; one of them remarked on the bizarre, “alien” hand on one of the babies in the crowd. That hand did look weird. There were too few fingers. It looked like a Teenage Mutant Ninja Turtle hand.

The problem was that this image was originally from a news story that was years prior to ChatGPT and the current AI boom. For this to be AI, the photographer would’ve had to have access to experimental software that was years away from being released to the public.

Sometimes people just look weird and, sometimes, they have weird hands, too.

While your point that sometimes people just have AI image associated traits is very salient, I worry you might not be considering the lengths to which these things will be taken, and why online discourse is (in my worried opinion) utterly fucked: the past ain’t safe either.

For now we still have archive.org but without a third party/external source validating that old content…you can’t be sure it’s actually old content.

It’s trivial to have LLMs write image gen prompts to “spice up those old news posts” at best (without remembering to tag the article as edited/updated, or bypassing that flag entirely)…and at worst to utterly fuck the very foundation of a shared and accepted past reality, not just for the present but for anyone using the internet itself as a lens to look back at the past.

AI image generators have been around for a fairly long time. I remember deepfake discussion from about a decade ago. Not saying the image under discussion is AI, though. I remember Alex Jones making conspiracy theories that revolved around Bush and lossy video compression artifacts too.

A more timely example is the people who think they can always tell when someone is trans.

Many unedited or older-model AI images I can detect at a glance. A few more I can find by looking for inconsistencies like hands or illogical items.

However, I am sure there will be more AI generated images, maybe even lightly edited afterwards, that I can’t detect.

You will need an AI to detect them, since, at least with images, AI is detectable by the way it creates the files.

In AI-generated sound you can see it in the waveform: it has less random noise altogether and it looks like a huge, well, wave. I wonder if something similar is true for images.

Basically yes, lack of detail, especially small things like hair or fingers. The texture/definition in AI images is usually less. Though, once again, depends on the technique being used.

I heard they managed to put some noise into AI generated audio, so it’s even more difficult to tell now.

It also doesn’t help that they are working to improve it all the time.

Plot twist: This image is AI generated.

Ofck

you replied to an AI generated comment

iFunny and mematic watermark? You’re bullshitting.

The Circle of Life

The only thing missing is a bad screenshot.

I’ve enworsened it for you!

Wow, Bruce Springsteen looks a lot rougher than he did just a few days ago.

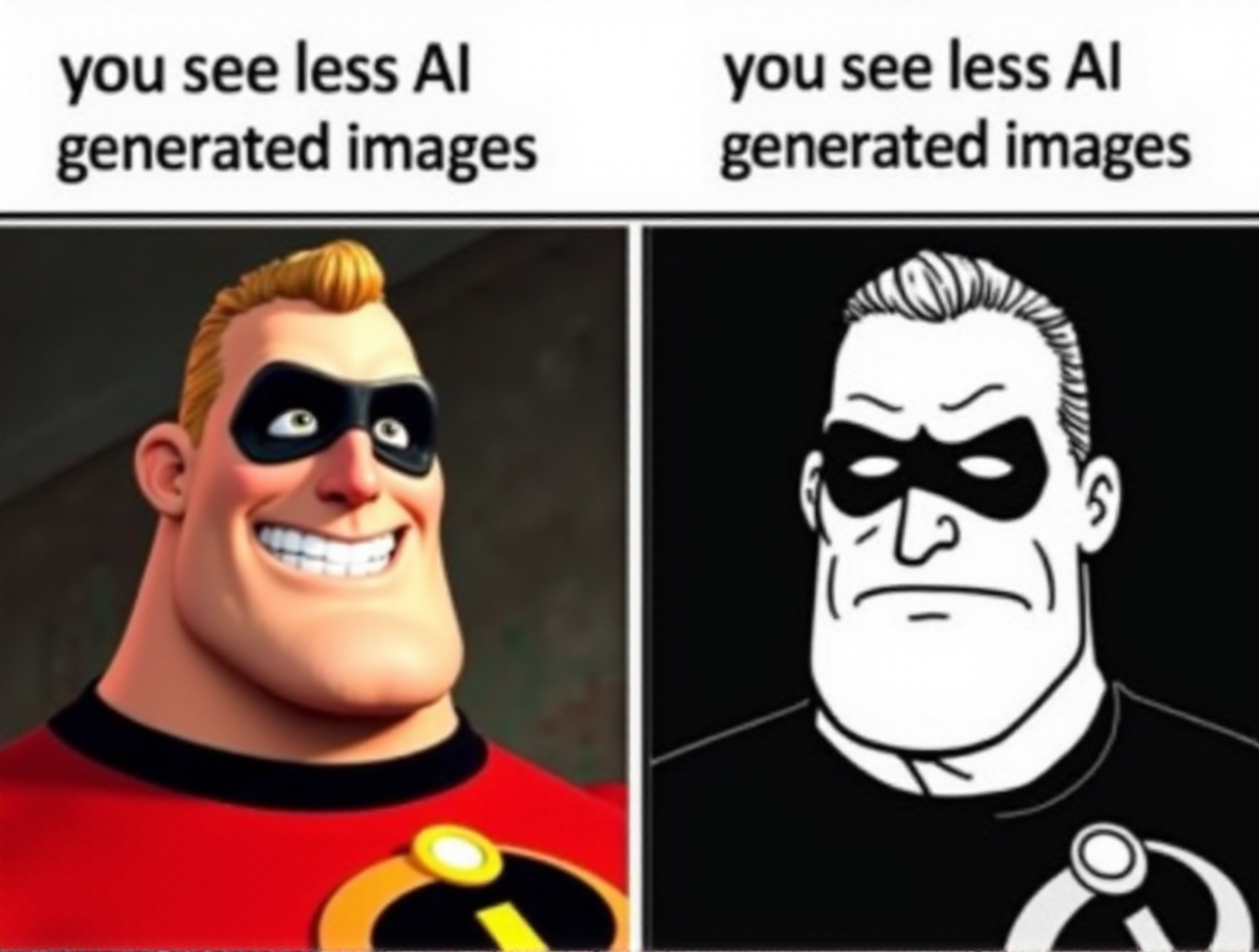

I actually preferred the janky days of AI (such as the uncanny Mr Incredible from the meme) compared to the modern ones.

As a visually impaired person on the internet: YES! Welcome to our world!

You’re lucky if you even get an image description that helpfully describes the image.

That description rarely tells you if it’s AI generated, and that’s assuming the description writer even knows themselves.

Everyone in the comments saying “look at the hands, that’s AI generated”, and I’m sitting here thinking, I just have to trust the discussion, because that image, just like every other image I’ve ever seen, is hard to fully decipher visually, let alone look for evidence of AI.

Alt text: a beautiful girl on a dock at sunset with some fugly hands and broken ass fingees

Honestly, auto generating text descriptions for visually impaired people is probably one of the few potential good uses for LLM + CLIP. Being able to have a brief but accurate description without relying on some jackass to have written it is a bonefied good thing. It isn’t even eliminating anyone’s job since the jackass doesn’t always do it in the first place.

I am so sorry, and I agree with your point, but I really had a good laugh at my mental image of a bonefied good thing :-)

If you know already or it’s autocorrect, just ignore me, if not, it’s bona fide :-)

The models that do that now are very capable but aren’t tuned properly IMO. They are overly flowery and sickly positive even when describing something plain. Prompting them to be more succinct only has them cut themselves off and leave out important things. But I can totally see that improving soon.

Unfortunately, the models have been trained on biased data.

I’ve run some of my own photos through various “lens” style description generators as an experiment and knowing the full context of the image makes the generated description more hilarious.

Sometimes the model tries to extrapolate context. For example, it will randomly decide to describe an older woman as a “mother” if there is also a child in the photo, even when a human could tell from context that it’s more likely a teacher and a student. There’s a lot a human can do that a bot can’t, including having the common sense to use appropriate language when describing people.

Image descriptions will always be flawed because the focus of the image is always filtered through the description writer. It’s impossible to remove all bias. For example, because of who I am as a person, it would never occur to me to even look at someone’s eyes in a portrait, let alone write what colour they are in the image description. But for someone else, eyes may be super important to them, they always notice eyes, even subconsciously, so they make sure to note the eyes in their description.

I’ve never seen a good answer to this in accessibility guides, would you mind making a recommendation? Is there any preferred alt text for something like:

- “clarification image with an arrow pointing at object”

- “Picture of a butt selfie, it’s completely black”

- “Picture of a table with nothing on it”

- “example of lens flare shown from camera”

- “N/A” dangerous

Sometimes an image is clearly only useful as a visual aid. I feel like “” (excluding it) makes people feel like they are missing the joke. But given it’s an accessibility tool, unneeded details may waste your time.

I guess my question would be: why do you need the picture as a visual aid? Is the accompanying body text confusing without that visual aid? If so, by having no alt text, you accept that you will leave VI people confused and only sighted people will have the clarification needed.

If you’re including a picture of a table with nothing on it, there’s a reason, so yes, that alt text is perfectly reasonable.

Personally I wish there was a way to enable two types of alt text on images, for long and quick context.

I understand your concern about unnecessary detail: if I’m in a rush, “a table with nothing on it” will do for quicker context, but there are times when it’s appropriate to go much deeper, “a picture of a hardwood rustic coffee table, taken from a high angle, natural sunlight, there are no objects on the table.”

I’m sorry that you have to go through this stuff.

Is there no software that can just tell you if it’s AI generated or not?

They exist, but none of them are perfect; they can’t possibly be perfect. It’s a bit of an arms race where AI images get more accurate and the detection software gets more particular to match, but the economic incentives are on the side of the former.

I think so, but I don’t have the mental energy at the moment to sit down and figure out if the AI detection software is accessible either. I know some of my colleagues use programs to check student work for LLM plagiarism, but I don’t assign work that can be done via an LLM so I haven’t looked into that, and that’s different from the AI images.

People are freaking out because for years, the central dogma was to “educate yourself, that makes you special, that makes you unique, that guarantees you a prosperous economic future” and such, and now this promise is about to be broken. People are in denial: AI is a good thing.

People are in denial: AI is a good thing.

Not in our broken ass system. First we need an economic system where people want to, but don’t need to work.

That better system looks more realistic now that we can have AI and robots do nearly everything. The artificial scarcity is becoming more and more obvious.

What jobs has AI actually taken, though?

none

Human nature is the real issue tbh. Scarcity was always the easier problem.

Without scarcity, artificial or not, the ruling class loses their grip on power. Capital will manufacture scarcity to whatever degree it is capable of, because without it there’s even less justification for owners to exist at the top of this neat little hierarchy they desperately cling to. They need to have their gated fortresses and their toys, cleanly separated from the rest of us undesirables.

They’ll use AI to bolster the security of their bunkers while drying up the rivers to run them. The whole while, they’ll blame any economic decline on the claim that “nobody wants to work anymore” after having automated all the jobs and offering no replacements.

I’d like to believe an alternative is possible. Perhaps we can come together and push for a better future, but that doesn’t seem to be the way things are going currently.

if you don’t have a job, you don’t get paid, so you lack basic things.

if robots just did everything, and necessities (food, water, heating, cooling, etc) were free, then that would be great. unfortunately, that’s not the reality we live in right now, so of course plenty of people (including myself) don’t like AI.

Isn’t this hate somewhat misplaced, still? Like, AI under capitalism might hurt you, but the problem is not AI.

Instead of working on the core issue, many people try to ban every symptom, and that might be a very simple distraction tool.

I agree 100% with this. Often arguments “against” AI summarize to “it is my suffering what gave my art value, so yours has none.”

Bro, that’s what capitalism told you. Your issue is not the “value” of AI it is the system that assigns and controls said value.

Often arguments “against” AI summarize to “it is my suffering what gave my art value, so yours has none.”

I see it more as: “AI is being used to increase suffering and kneecap labor, especially forms of labor which are considered pleasant. In the process, nearly every cent surrounding LLMs replacing workers is getting redirected to the already wealthy.”

So you mean AI is being used by Capitalism, the same way it uses everything else?

As a tool to deprive the workers of the means of production, yeah.

Exactly.

Yup.

Exactly.

Oh great, instead of me, a machine owned by a capitalist will produce the art! /s

Well, it’s good — if some of the profits of the increased productivity make it to the people who aren’t billionaires or multimillionaires.

Bullshit. AI is taking over everything I enjoy doing. Drawing, writing, making music, what will be left to accomplish? To create? To have pride in?

There’s no pride in clicking generate every couple minutes.

Curse this lifeless world we now live in.

Wish I had more upvotes to give.

That stupid bit drilled less vs fewer into my head for the rest of my life.

Morer

obligatory meme generated by AI (flux 1.dev on my rtx 4070 super)


One thing that has happened is that I see AI spam farms less and less on some platforms, while on others, like the art platform Pixiv, they have started refusing to label their work as AI to get a wider reach (the labeled ones get immediately blocked and mass-reported by normal people).

Friendly reminder that my AI-generated image detector is available to use free of charge here: https://huggingface.co/spaces/umm-maybe/sdxl-detector

Except these are very prone to false positives.

I find it very funny that people are so concerned about false positives. Models like these should really only be used as a screening tool to catch things and flag them for human review. In that context, false positives seem less bad than false negatives (although, people seem to demand zero error in either direction, and that’s just silly).
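A minimal sketch of that screening trade-off, using made-up detector scores (not output from the sdxl-detector model linked above). Anything scoring at or above the threshold is flagged for human review; lowering the threshold turns missed fakes into extra flags that a person then has to clear.

```python
# Hypothetical detector outputs: (score that the image is AI-generated, ground truth).
# These numbers are invented for illustration only.
samples = [
    (0.95, True), (0.80, True), (0.62, True), (0.40, True),
    (0.70, False), (0.45, False), (0.20, False), (0.05, False),
]


def confusion(samples, threshold):
    """Count outcomes when every image scoring >= threshold is flagged for review."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for score, is_ai in samples:
        flagged = score >= threshold
        if flagged and is_ai:
            counts["tp"] += 1  # fake caught, human confirms it
        elif flagged:
            counts["fp"] += 1  # real image flagged, human clears it
        elif is_ai:
            counts["fn"] += 1  # fake slipped through, nobody ever looks again
        else:
            counts["tn"] += 1  # real image correctly ignored
    return counts


for t in (0.9, 0.5, 0.3):
    print(t, confusion(samples, t))
```

With these toy numbers, dropping the threshold from 0.9 to 0.3 takes false negatives from 3 to 0 while false positives rise from 0 to 2, which is the cheaper kind of mistake when a human reviews every flag.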

Frankly, it sucks. Every input image I tried had over 90% artificial rating.

If you don’t mind, I’d be interested to see the images you used. The broad validation tests I’ve done suggest 80-90% accuracy in general, but there are some specific categories (anime, for example) on which it performs kinda poorly. If your test samples have something in common it would be good to know so I can work on a fix.
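For spotting categories like that, one rough approach is to tag each test image with a category and break accuracy down per group, since a decent overall number can hide a weak spot. The category names and results below are hypothetical, not real validation data for the detector.

```python
from collections import defaultdict

# Hypothetical test results: (category, predicted_ai, actually_ai).
results = [
    ("photo", True, True), ("photo", False, False), ("photo", False, False),
    ("photo", True, True), ("photo", True, False),
    ("anime", True, False), ("anime", True, False),
    ("anime", True, True), ("anime", False, True),
]


def accuracy_by_category(results):
    """Return {category: fraction of correct predictions} for each category."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for category, predicted_ai, actually_ai in results:
        total[category] += 1
        correct[category] += int(predicted_ai == actually_ai)
    return {cat: correct[cat] / total[cat] for cat in total}


print(accuracy_by_category(results))  # {'photo': 0.8, 'anime': 0.25}
```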