Tech behemoth OpenAI has touted its artificial intelligence-powered transcription tool Whisper as having near “human level robustness and accuracy.”

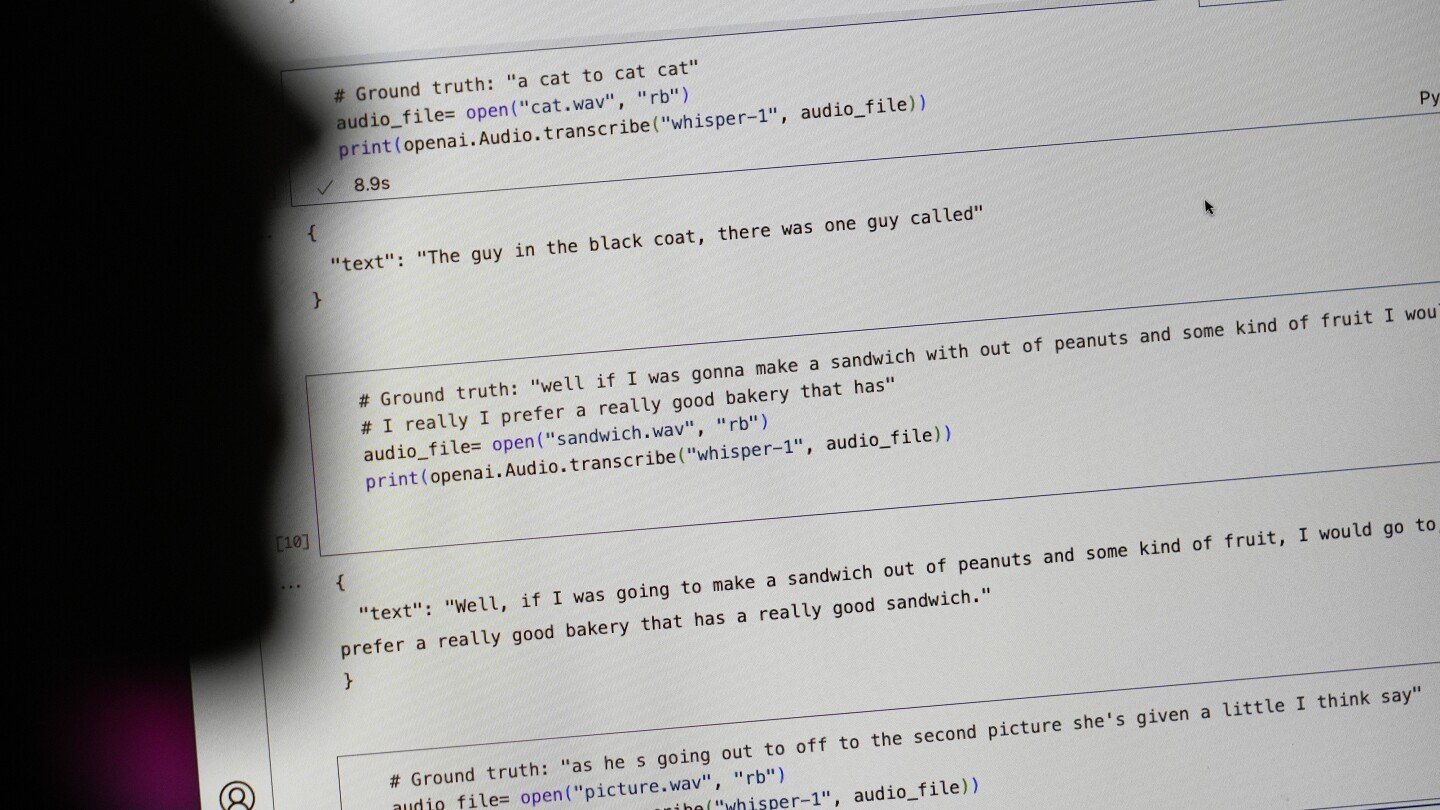

But Whisper has a major flaw: It is prone to making up chunks of text or even entire sentences, according to interviews with more than a dozen software engineers, developers and academic researchers. Those experts said some of the invented text — known in the industry as hallucinations — can include racial commentary, violent rhetoric and even imagined medical treatments.

Experts said that such fabrications are problematic because Whisper is being used in a slew of industries worldwide to translate and transcribe interviews, generate text in popular consumer technologies and create subtitles for videos.

More concerning, they said, is a rush by medical centers to utilize Whisper-based tools to transcribe patients’ consultations with doctors, despite OpenAI’s warnings that the tool should not be used in “high-risk domains.”

Why is generative AI even needed for audio transcription? We’ve had decent voice recognition tools for years even on cheap consumer grade stuff.

We also used to have decent web searches!

Because with normal algorithms you have someone to blame.

AI is a trick to hide when you steer the results the way you want.

Whisper really is a lot better when it works, and it’s free. The problem is that it refuses to produce gibberish or give up when it doesn’t work. You’ll always need an editor.

The toaster oven I just invented works much better than a traditional one. It reheats French fries perfectly, you can dehydrate in it, makes succulent roasted chicken, and about 2.5% of the time it burns down your house. You’ll always need to keep an eye on it to make sure that doesn’t happen. Remember though, much better than a traditional one.

Can I try the chicken before I make a decision?

You need an editor for traditional transcription tools too :) and it’s A LOT more work. They don’t even do punctuation or names.

This definition of “better” feels like claiming that a beeper that’s constantly hooked to power is the perfect alarm because it warns you every time someone is trying to break in - while entirely ignoring that it is just constantly blaring.

I use it for generating subtitles. It figures out context, it ignores stuttering, it does punctuation etc. It really is just better. With clean audio it transcribes like a human does.

It does better than other techniques with dirty audio, but when it fails it fails weird, which is the big issue here.

No, we really haven’t had on-device voice recognition that meets any definition of “decent”. Anything reasonable phones out to “the cloud” for decent voice recognition.

So? I’d rather have my software talk to a server than be downright wrong just so another business can climb onto the AI bandwagon.

You can’t do that with personal information like what doctors need transcribed. It has to be local.

Reality is more nuanced than this. You can absolutely be HIPAA compliant while using “cloud” servers as long as they are sufficiently isolated and secured. The requirements are definitely insufficient to protect your data from a Motivated State Actor™ but they are good enough to keep your data away from an abusive family member or crazy ex. I have worked on systems that handle patient data as well as other systems with restrictions I can’t discuss and I can assure you patient data is much easier to move around and handle compared to state secrets.

Edit: funny story, I just got back from a doctor appointment where they asked me to sign a consent form for recording and transcription of the visit by a computer system. It’s definitely happening, in practice.

Because AI!